Mayank Patel

Apr 7, 2025

4 min read

Last updated Jan 8, 2026

When launching a new product—whether it’s a fresh seasonal drop, a limited-time collaboration, or a completely new SKU—you often face the same core problem: no historical data. No prior sales patterns. No customer behavior data. No previous forecasts to lean on.

But decisions still need to be made—about inventory, pricing, marketing, and fulfillment. This guide breaks down how to approach these zero-data SKUs using a blend of structured thinking, smart proxies, early signals, and adaptive systems.

Even without historical data, you can’t operate in a vacuum. Begin with assumptions built around:

These aren’t perfect—but they’re working hypotheses, and that’s better than flying blind.

Tip: Create a lightweight “SKU Assumption Template” where you log the category, price tier, expected marketing push, launch channel, and fulfillment method. Use this to compare similar past launches—even if the product is technically “new.”

When historical data for the SKU doesn’t exist, use similarity models. Look for analogs:

If your last collaboration with Artist X sold 500 hoodies in 3 days, your new drop might follow a similar trajectory—adjusted for changes like price point or season.
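As a rough illustration of adjusting an analog, the sketch below scales a past launch's sales by two multipliers. The function name and both factors are hypothetical; the 0.9 and 0.8 values are placeholder guesses, not figures from any real launch.

```python
def analog_forecast(analog_units: int,
                    price_factor: float = 1.0,
                    season_factor: float = 1.0) -> int:
    """Scale an analog launch's unit sales by assumed adjustment factors.

    Both factors are judgment calls: below 1.0 means you expect weaker
    demand than the analog, above 1.0 means stronger.
    """
    if analog_units < 0:
        raise ValueError("analog_units must be non-negative")
    return round(analog_units * price_factor * season_factor)


# The last collab sold 500 hoodies in 3 days. Assume (hypothetically) that a
# higher price trims demand by 10% and an off-season launch by another 20%.
forecast = analog_forecast(500, price_factor=0.9, season_factor=0.8)
print(forecast)  # 360
```

Writing the factors down explicitly makes it easier to revisit them in the post-launch retrospective.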

The goal is to find patterns of performance from similar contexts, not identical products.

Use drops with similar:

If internal analogs don’t exist, tap external ones. It’s not perfect, but it's better than guesswork. Look at:

Pre-launch data can be a goldmine. Use it to adjust expectations before inventory locks in:

If you’re seeing stronger signals than previous launches, that’s your cue to up inventory. Weak signals? Dial it back or hold some units in reserve.
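One way to make "up inventory" or "dial it back" concrete is to scale a baseline order by the ratio of a pre-launch signal to the same signal from a previous launch. This is a minimal sketch: the choice of signal (for example, waitlist sign-ups), the function name, and the cap and floor values are all assumptions for illustration.

```python
def adjust_inventory(baseline_units: int,
                     signal_now: int,
                     signal_prior: int,
                     cap: float = 1.5,
                     floor: float = 0.5) -> int:
    """Scale a baseline order by the ratio of current to prior pre-launch signal.

    The ratio is clamped to [floor, cap] so one noisy signal cannot swing
    the order too far in either direction.
    """
    if signal_prior <= 0:
        raise ValueError("signal_prior must be positive")
    ratio = signal_now / signal_prior
    ratio = max(floor, min(cap, ratio))
    return round(baseline_units * ratio)


# 1,200 sign-ups now versus 1,000 before the previous launch.
print(adjust_inventory(1000, signal_now=1200, signal_prior=1000))  # 1200
```

The clamp is a deliberate design choice: a single strong signal raises the order, but never beyond what you are prepared to hold in stock.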

The first 24–72 hours of a new SKU’s lifecycle provide real-time learning. Monitor:

Push this data to your ops and marketing teams daily. Don’t wait for the week to end. React fast. Example: if size M sells out in 12 hours while other sizes linger, trigger a "Notify Me" form or restock email, and consider a limited pre-order run.
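A daily check like the size M example could be sketched as a per-variant sell-through calculation. The data shapes, the function name, and the 90% threshold below are hypothetical; in practice the numbers would come from your order and inventory systems.

```python
def flag_fast_sellers(stock: dict[str, int],
                      sold: dict[str, int],
                      threshold: float = 0.9) -> list[str]:
    """Return variants whose sell-through (sold / initial stock) meets the threshold."""
    flagged = []
    for variant, units in stock.items():
        if units <= 0:
            continue  # skip variants that launched with no stock
        sell_through = sold.get(variant, 0) / units
        if sell_through >= threshold:
            flagged.append(variant)
    return flagged


# Hypothetical snapshot 12 hours after launch.
initial_stock = {"S": 100, "M": 100, "L": 100}
units_sold = {"S": 40, "M": 100, "L": 35}
print(flag_fast_sellers(initial_stock, units_sold))  # ['M']
```

Each flagged variant is a candidate for a "Notify Me" form, a restock email, or a pre-order run.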

For truly unpredictable SKUs, consider:

A tiered inventory strategy works well:

Backfilling also works—especially if you have agile manufacturing or local production relationships.

As you gather more launch data, your team should build a “zero-data SKU” forecasting toolkit. It should include:

This lets you run “what-if” scenarios. E.g., “If this new collab gets a 30k email push and 5 influencer posts, and performs like our last 2 hoodie drops, what should inventory look like?”
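A what-if scenario like this one can be expressed as a small function. The sketch below averages the analog drops, scales by email reach relative to the analogs, and applies an assumed lift per influencer post. The linear scaling, the 2% lift per post, and every number in the example are hypothetical placeholders to be replaced with values from your own launch history.

```python
from statistics import mean


def what_if_units(analog_units: list[int],
                  analog_email_reach: int,
                  planned_email_reach: int,
                  influencer_posts: int = 0,
                  lift_per_post: float = 0.02) -> int:
    """Estimate units for a new drop from analog drops and planned marketing push.

    Assumes demand scales linearly with email reach and that each influencer
    post adds a fixed percentage lift. Both are simplifying assumptions.
    """
    if not analog_units:
        raise ValueError("at least one analog drop is required")
    if analog_email_reach <= 0:
        raise ValueError("analog_email_reach must be positive")
    base = mean(analog_units)
    reach_ratio = planned_email_reach / analog_email_reach
    influencer_lift = 1 + lift_per_post * influencer_posts
    return round(base * reach_ratio * influencer_lift)


# Last 2 hoodie drops sold 500 and 700 units on a 20k email push (hypothetical).
# Scenario: 30k email push and 5 influencer posts.
print(what_if_units([500, 700], 20_000, 30_000, influencer_posts=5))  # 990
```

Running the same function with low, base, and high inputs gives a range rather than a single point estimate.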

Keep refining these models with every launch.

After every new drop, run a retrospective. Document:

Save this in a “Drop Debrief” database. Over time, it becomes a playbook for handling future unknowns.

Handling SKUs with no historical data is hard—but not impossible. You can make smart, proactive decisions by combining structured assumptions, proxy insights, early signals, and fast feedback loops.

The biggest mistake is treating these launches as “unpredictable.” They’re less predictable, yes—but with the right process, they can still be measurable, learnable, and improvable.

If you treat each new drop as both a launch and a test, your system will get sharper over time—and so will your outcomes.

Why Some Lead Form Fields Kill Conversions (And Which Ones Actually Help)

Most B2B funnels break at the form. Teams keep adding fields like company size, job title, phone number, and budget, hoping better data will improve lead quality. Instead, conversion rates drop and demo requests slow down. What started as a qualification step becomes a friction point. Every additional field increases effort, hesitation, or privacy concerns, quietly pushing legitimate prospects away before they ever submit the form.

Lead forms sit at the centre of the funnel, which creates a constant trade-off. Collect too little information, and sales receive unqualified leads. Collect too much, and potential customers abandon the form. The real challenge is knowing which fields genuinely improve qualification and which ones only create friction. This blog breaks down that difference and explains how to design forms that capture leads without hurting conversions.

Most long lead forms are not designed intentionally. They grow over time. The form becomes a place for data collection rather than a mechanism for moving prospects through the funnel. Understanding why teams add these fields is the first step to identifying which ones actually create value.

Most B2B teams design forms with a single objective: to improve lead quality. Additional fields are added to capture firmographic data, assess intent, or help sales prioritise outreach. Over time, the form becomes longer, the questions become more detailed, and the assumption remains the same: more information should produce better leads.

This is where the core trade-off emerges.

Understanding this trade-off helps teams evaluate whether a field actually improves decision-making or simply adds friction.

| Aspect | Qualification | Abandonment |
| --- | --- | --- |
| Definition | The process of identifying whether a lead fits the company’s ideal customer profile or purchasing potential. | The point at which a user leaves the form without submitting it. |
| Purpose in the funnel | Helps sales prioritise leads and allocate time to higher-value opportunities. | Reduces the number of captured leads, weakening the top of the funnel. |
| Typical triggers | Fields like company name, job title, or company size that provide useful context for sales teams. | Long forms, sensitive questions, or complex dropdowns that increase effort or discomfort. |
| User perception | Users feel they are providing relevant information to request a demo or contact sales. | Users feel the form requires too much effort or asks for unnecessary personal or company data. |
| Impact on conversion rates | Moderate qualification fields may slightly reduce conversions but improve lead quality. | Excessive or poorly chosen fields significantly increase drop-off rates. |
| Design implication | Fields should only exist if they help a meaningful sales or routing decision. | Any field that does not influence decisions becomes unnecessary friction. |

Form abandonment happens when the form introduces friction that feels unnecessary or uncomfortable. Small moments of hesitation accumulate as the user progresses through the form. When the perceived effort becomes higher than the expected value, users exit the flow.

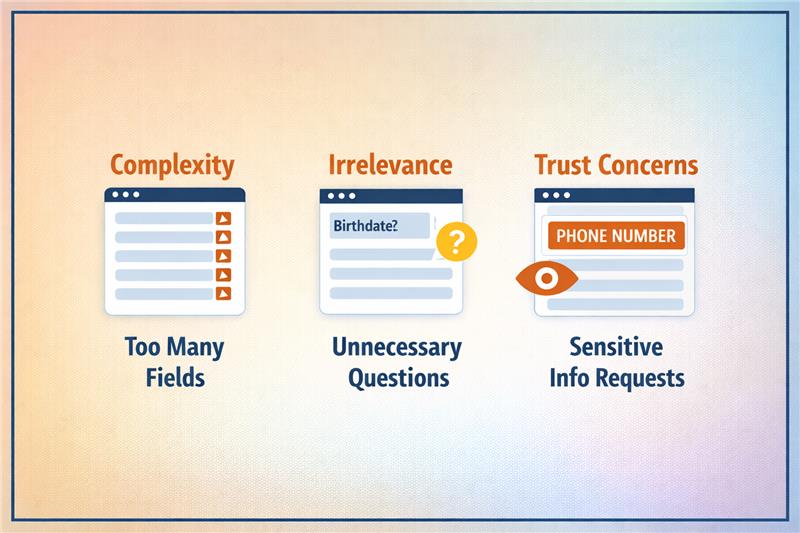

Three behavioural triggers typically drive this drop-off:

Some fields immediately create hesitation because users worry about how the information will be used. Questions that appear sensitive or intrusive increase perceived risk before trust is established.

Trigger: Fields such as phone numbers, revenue ranges, or personal contact details raise concerns about unwanted sales calls or data misuse, prompting users to abandon the form.

Certain questions require users to pause, think, or estimate information they may not know immediately. When a form demands too much mental effort, the completion process slows down.

Trigger: Complex dropdown menus, unclear categories, or questions like company revenue or employee ranges increase cognitive load and discourage users from finishing the form.

Some information is useful later in the sales process but appears too early in the initial conversion step. When advanced qualification questions appear prematurely, users feel they are entering a long evaluation process.

Trigger: Asking detailed requirements, budget ranges, or implementation timelines during the first interaction creates friction because the user has not yet committed to deeper engagement.

The goal is not to eliminate qualification from the form but to focus on fields that deliver decision value without creating unnecessary resistance. When forms prioritise these signals, teams gain useful context while keeping the submission experience manageable for the user.

Identifying and removing the right form fields reduces friction while preserving the information that genuinely supports qualification.

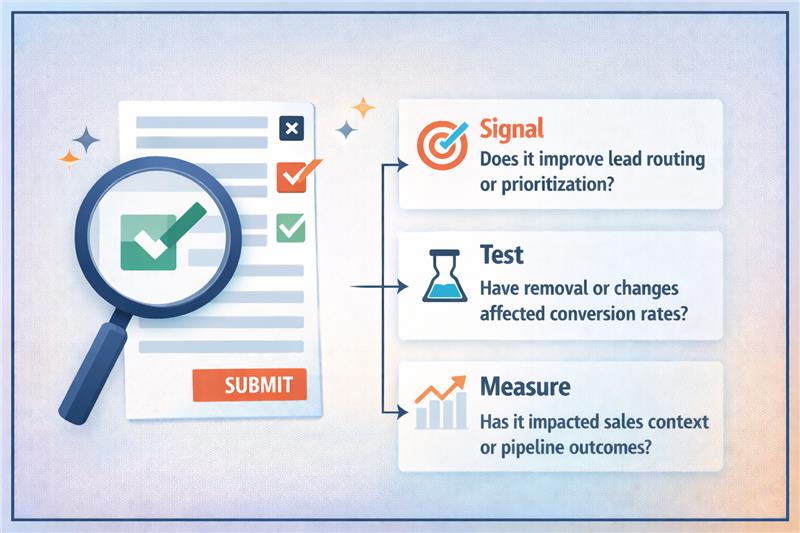

The most practical approach is to assess every form field through a signal versus friction lens. Signal represents the decision value the field provides, while friction represents the effort or hesitation it introduces for the user. When teams analyse fields using this framework, it becomes easier to separate necessary qualification questions from unnecessary data requests.
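The signal versus friction lens can be turned into a simple audit. In the sketch below, each field gets a 1 to 5 score on both axes. The scale, the scores, the field names, and the decision rule are illustrative assumptions; a real audit would set scores with sales and analytics input.

```python
from dataclasses import dataclass


@dataclass
class FormField:
    name: str
    signal: int    # 1-5: how much the field informs routing or prioritisation
    friction: int  # 1-5: how much effort or hesitation it creates for the user


def classify(field: FormField) -> str:
    """Apply a simple rule: keep high-signal fields, drop or defer high-friction ones."""
    if field.signal > field.friction:
        return "keep"
    if field.signal == field.friction:
        return "review"
    return "remove_or_defer"


# Hypothetical scores for three common demo-form fields.
fields = [
    FormField("work_email", signal=5, friction=1),
    FormField("company_size", signal=3, friction=3),
    FormField("phone_number", signal=2, friction=4),
]
for f in fields:
    print(f.name, "->", classify(f))
```

The "review" bucket is where testing effort is best spent, since those fields are the ones where the trade-off is genuinely unclear.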

The objective is to understand how each field affects both conversion behaviour and downstream pipeline outcomes. This requires measuring not only form completion rates but also how those leads progress through the sales process. When testing is done carefully, teams can improve conversion rates without sacrificing qualification quality.
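To compare form variants on both axes, it helps to compute completion and downstream quality together. The sketch below is one possible shape for that calculation; the function name, metric names, and all counts are hypothetical, and "qualified" stands for whatever downstream stage your team treats as a good lead.

```python
def variant_metrics(views: int, submissions: int, qualified: int) -> dict[str, float]:
    """Return form conversion rate plus two downstream quality measures."""
    if views <= 0:
        raise ValueError("views must be positive")
    return {
        # Share of form viewers who submit.
        "conversion_rate": submissions / views,
        # Share of submissions that sales later marks as qualified.
        "qualified_rate": qualified / submissions if submissions else 0.0,
        # Qualified leads per form view: the figure that combines both effects.
        "qualified_per_view": qualified / views,
    }


# Hypothetical test: the short form converts better but yields the same
# number of qualified leads per view as the long form.
short_form = variant_metrics(views=1000, submissions=80, qualified=20)
long_form = variant_metrics(views=1000, submissions=50, qualified=20)
print(short_form)
print(long_form)
```

In this made-up example the short form looks like a clear winner on conversion rate alone, but qualified leads per view are identical, which is exactly the kind of result that form completion rates on their own would hide.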

Lead forms should capture decisions, not excess data. Every field must justify its presence by improving routing, prioritisation, or sales context. When forms collect information that does not influence these decisions, friction increases and conversion rates drop. The most effective funnels focus on a small set of high-signal fields that capture intent without slowing users down.

Improving forms requires a disciplined approach: evaluate each field for signal, test changes carefully, and measure both conversion rates and downstream pipeline outcomes. When designed correctly, forms become a fast entry point rather than a barrier. If your funnel is struggling with form friction or qualification trade-offs, Linearloop helps teams design and optimise conversion flows that improve both lead capture and pipeline quality.

Mayur Patel

Mar 11, 2026

6 min read

How to Optimise Demo Request Flows Without Disrupting Sales Infrastructure

Experimenting with demo request flows is risky for most B2B teams. A small change to a form can break lead routing, override territory rules, double-book SDR calendars, or corrupt CRM records. Since demo requests trigger multiple operational systems at once, many teams avoid testing entirely. As a result, high-intent conversion points remain untouched, even when conversion rates could clearly improve.

Yet demo request forms sit at the most valuable moment in the funnel, when a visitor is ready to talk to sales. Improving this step can directly increase the qualified pipeline. The challenge is running experiments without disrupting routing logic, territory ownership, or calendar availability. This blog explains how teams can test demo request flows safely while keeping their sales infrastructure intact.

Demo request flows sit directly on top of sales infrastructure. The moment a visitor submits a demo request, multiple operational systems activate simultaneously. Because these systems depend on specific fields and routing logic, even small changes to the form can break downstream processes.

Experimenting with demo request flows can easily disrupt sales operations. These forms sit at the junction of marketing and sales infrastructure, triggering routing engines, CRM records, and scheduling systems simultaneously. When teams modify form fields, qualification logic, or scheduling steps without considering these dependencies, operational failures appear quickly. Leads may route incorrectly, ownership rules can break, and booking flows can fail before a meeting is even scheduled.

The most common issue is incorrect lead assignment. Routing systems rely on specific inputs such as geography, company size, or industry. If experiments remove or change these fields, leads can bypass routing rules and land with the wrong representative. Territory conflicts follow, especially in organisations with strict regional ownership.

These failures affect more than operations. SDR teams experience overloaded calendars or missed follow-ups. CRM data becomes inconsistent when records map incorrectly or duplicate entries appear. Pipeline reporting also suffers because demo requests may not be attributed properly to campaigns or sales teams. Revenue forecasts, conversion analysis, and performance tracking become unreliable. The solution is designing tests that respect routing logic, territory ownership, and sales infrastructure dependencies.

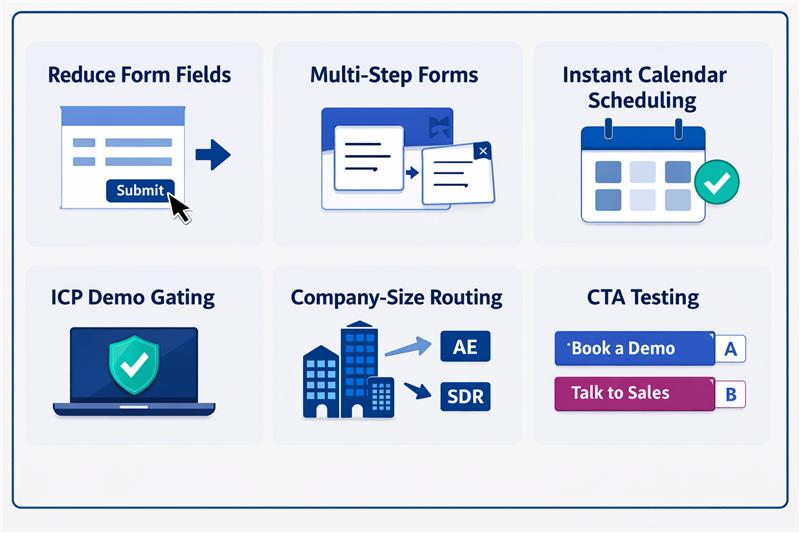

Teams often identify friction in demo request flows but hesitate to experiment because these forms sit on top of critical sales infrastructure. Even small UI changes can affect routing rules, territory ownership, or scheduling logic. Many CRO ideas can improve conversions, but if implemented without operational safeguards, they can disrupt CRM workflows and sales execution.

| Experiment | What changes | Conversion upside | Operational risk |
| --- | --- | --- | --- |
| Reduce form fields | Remove fields like company size or industry | Lower friction, higher submissions | Routing rules lose required inputs |
| Multi-step forms | Break long forms into steps | Higher completion rates | Partial data can break routing or CRM mapping |
| Instant calendar scheduling | Show rep calendars immediately | Faster meeting booking | Wrong routing exposes incorrect calendars |
| ICP demo gating | Allow scheduling only for qualified leads | Higher lead quality for sales | Qualification logic can conflict with routing |
| Company-size routing | Route enterprise leads to AEs | Faster sales response | Incorrect data misroutes territories |
| CTA testing | “Book a demo” vs “Talk to sales” | Higher click and submit rates | Intent signals may disrupt qualification workflows |

Demo request flows should be treated as sales infrastructure. The safest way to experiment is to separate the experimentation layer from the operational layer that controls routing, territories, calendars, and CRM workflows. When these layers remain independent, teams can test improvements without disrupting sales execution.

Routing systems depend on structured data fields to determine ownership, territory assignment, and follow-up workflows. Experiments should never remove or corrupt the inputs these systems require.

Reducing form friction is a common experiment, but routing systems still require company-level data. Enrichment allows teams to shorten forms while preserving operational inputs.
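A minimal sketch of this idea: before a lead reaches the routing system, fill any routing input the shortened form no longer collects from an enrichment lookup, and record what is still missing. The field names, the `enrichment` dictionary (standing in for whatever enrichment provider you use), and the payload shape are assumptions for illustration, not a specific vendor's API.

```python
# Inputs the routing system is assumed to require (hypothetical names).
REQUIRED_ROUTING_FIELDS = ("country", "company_size", "industry")


def build_routing_payload(form_data: dict, enrichment: dict) -> dict:
    """Merge form answers with enrichment data, preferring what the user typed."""
    payload = {"email": form_data["email"]}
    missing = []
    for field in REQUIRED_ROUTING_FIELDS:
        value = form_data.get(field) or enrichment.get(field)
        if value:
            payload[field] = value
        else:
            missing.append(field)
    # Downstream logic can send incomplete leads to manual review
    # instead of letting them bypass routing rules.
    payload["missing_routing_fields"] = missing
    return payload


# The short-form variant only asks for email and country.
lead = build_routing_payload(
    {"email": "ana@example.com", "country": "DE"},
    {"company_size": "51-200", "industry": "SaaS", "country": "US"},
)
print(lead)
```

Preferring user-entered values over enriched ones is a design choice; enrichment data can be stale, so it is used here only to fill gaps.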

Running experiments across all traffic increases operational risk. Limiting tests to defined segments helps isolate potential failures without affecting the entire pipeline.
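Segment-limited exposure could look like the sketch below: only visitors in an allowed segment are eligible, and a stable hash of the visitor ID decides who sees the variant. The segment labels, the 10% default, and the function name are hypothetical; most experimentation tools offer their own targeting that does the same job.

```python
import hashlib


def in_experiment(visitor_id: str,
                  segment: str,
                  allowed_segments: set[str],
                  rollout_pct: int = 10) -> bool:
    """Decide whether a visitor sees the experimental demo flow.

    Hashing the visitor ID gives a stable bucket from 0 to 99, so the same
    visitor always gets the same answer across sessions.
    """
    if segment not in allowed_segments:
        return False
    digest = hashlib.sha256(visitor_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < rollout_pct


# Only SMB traffic is eligible; enterprise leads always get the existing flow.
print(in_experiment("visitor-123", "enterprise", {"smb"}))  # False
```

Keeping high-value segments out of the test entirely means a routing failure in the variant cannot touch them.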

Build routing safeguards before running tests

Operational safeguards ensure leads continue to reach sales teams even if an experiment fails or routing logic behaves unexpectedly.
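One simple safeguard is a fallback owner: if a lead's routing input is missing or does not match any territory, it goes to a monitored queue rather than being dropped or misassigned. The territory map, the queue name, and the function below are illustrative assumptions, not a particular CRM's routing feature.

```python
def route_lead(lead: dict,
               territory_owners: dict[str, str],
               fallback_queue: str = "unassigned-demo-queue") -> str:
    """Return the owner for a lead, falling back to a shared queue when routing fails."""
    region = lead.get("region")
    owner = territory_owners.get(region) if region else None
    return owner or fallback_queue


# Hypothetical territory map.
owners = {"EMEA": "sdr-emea@example.com", "NA": "sdr-na@example.com"}

print(route_lead({"email": "a@example.com", "region": "EMEA"}, owners))
print(route_lead({"email": "b@example.com"}, owners))  # region missing -> fallback
```

Tracking how many leads land in the fallback queue during a test also gives an early warning that an experiment has broken a routing input.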

Monitor operational metrics

Demo flow experiments should not be judged solely on form conversion performance. Operational stability and sales efficiency must also be monitored.

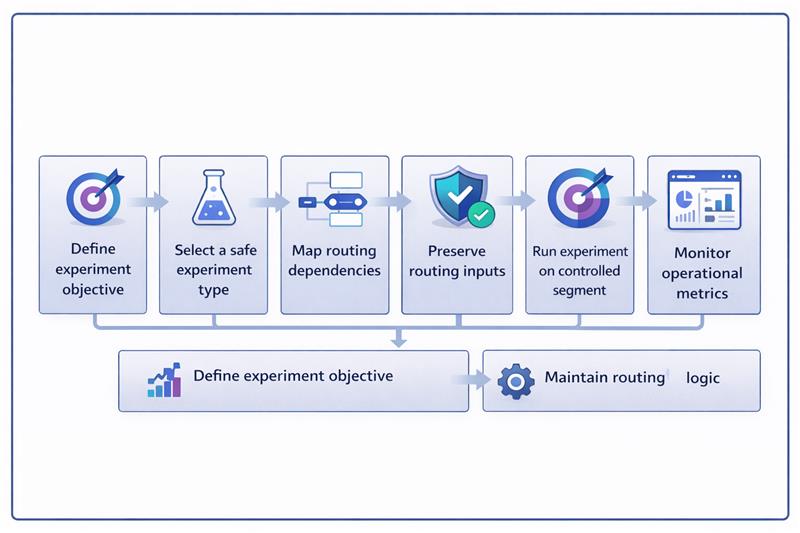

Running experiments on demo request flows requires a controlled workflow. The experiment should modify the user experience while keeping the routing, CRM mapping, and calendar systems unchanged.

The example below shows how a team tests a multi-step demo form while preserving routing inputs through enrichment and keeping backend assignment logic intact.

Demo request flows are deeply integrated with sales infrastructure. Routing engines, territory ownership rules, CRM workflows, and SDR calendars all depend on the data these forms generate. This is why many teams avoid experimentation altogether. The real challenge is how to experiment without disrupting the systems that turn demo requests into a pipeline.

When experimentation is separated from routing logic, teams can safely optimise these high-intent conversion points. Preserving routing inputs, using enrichment, running controlled experiments, and monitoring operational metrics allow improvements without operational risk. If your team wants to improve demo conversion without breaking sales systems, Linearloop helps design experimentation frameworks that protect routing logic while enabling continuous optimisation.

Mayur Patel

Mar 9, 2026

6 min read

Personalisation vs Broad UX Changes in Conversion Rate Optimization Services

Most digital teams today are under pressure to optimise experiences faster. Personalisation often becomes the default response. Marketing teams want segment-specific messaging. Product teams push for behaviour-based interfaces. CRO teams experiment with targeted variations for traffic sources, devices, and user types. But this quickly creates a new problem: too many variants, fragmented analytics, and unclear optimisation priorities.

At the same time, many performance issues are not segment-specific. Poor checkout flows, weak value propositions, slow pages, or confusing onboarding affect all users. Instead of fixing the core experience, teams often jump directly to personalisation because modern experimentation tools make it easy. This creates tension between two competing approaches: improving the experience for everyone or creating targeted experiences for specific segments.

The real question optimisation teams should ask is simple: When is personalisation actually justified? What evidence should exist before you move from broad improvements to segment-level changes? This blog answers that question by outlining when personalisation makes sense and the data signals you should require before implementing it.

Many optimisation teams struggle with a recurring problem: declining conversion rates or inconsistent user behaviour across traffic segments often push them toward personalisation as the immediate solution. In experimentation and CRO, personalisation refers to delivering different experiences to different user segments based on attributes such as traffic source, location, device type, or behavioural history. Instead of showing the same interface to every visitor, teams create targeted variations.

However, personalisation is frequently misunderstood and applied too early in the optimisation process. Broad UX improvements address problems that affect the entire user base, while personalisation targets specific segments with different experiences. The problem is that many teams skip fixing the core experience and jump directly to segmentation because experimentation tools make personalisation easy to implement, which leads to unnecessary complexity and fragmented insights. Understanding this distinction is critical before deciding when personalisation is actually justified.

Before introducing personalisation, teams must first determine whether the problem affects the entire user base or only specific segments. The distinction is operationally important because the two approaches differ significantly in scalability, complexity, and long-term maintainability.

| Dimension | Broad experience changes | Personalisation |
| --- | --- | --- |
| Core concept | Improves the core product or website experience for all users. One improved version replaces the existing experience universally. | Delivers different experiences to different user segments based on attributes such as behaviour, device, location, or traffic source. |
| Optimisation objective | Fixes structural usability issues affecting the majority of users. Focus is on improving the baseline experience. | Addresses behavioural differences between segments where the same experience does not perform equally well. |
| Typical examples | Simplifying checkout flows, improving page speed, clarifying product value propositions, reducing form friction, improving navigation. | Custom messaging for paid traffic, simplified flows for mobile users, returning-user shortcuts, location-based offers or pricing signals. |
| Scalability | Highly scalable because the improvement applies universally and requires minimal ongoing management. | Less scalable because each segment variation must be built, tested, maintained, and monitored separately. |
| Operational complexity | Lower complexity. Fewer variants mean easier experimentation, deployment, and quality assurance. | Higher complexity. Multiple variations increase testing overhead, QA requirements, and deployment coordination. |
| Analytics interpretation | Easier to measure impact because the entire user base experiences the same change, simplifying attribution and analysis. | Harder to interpret results because multiple segments behave differently and results must be analysed separately. |
| Long-term maintenance | Minimal maintenance once implemented because the experience remains consistent across users. | Ongoing maintenance required as segment logic, experiments, and experience variations evolve over time. |

Many experimentation programmes lose effectiveness because teams introduce personalisation too early in the optimisation process. Instead of identifying whether a problem affects the core experience, teams immediately begin segmenting users and launching targeted variations. Understanding why teams fall into this pattern is critical before deciding when personalisation is actually justified.

Personalisation should never be implemented based on assumptions or isolated behavioural signals. The following evidence types help determine whether personalisation is justified or whether broader experience improvements will deliver better results.

Teams must first establish whether a segment consistently performs differently from the overall user base. This requires analysing conversion metrics across meaningful cohorts such as device types, traffic sources, new versus returning users, or geographic groups.
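One common way to check whether a segment's conversion rate differs from a comparison group by more than chance is a two-proportion z-test. The sketch below uses only the standard library; the visitor and conversion counts are hypothetical, and a single test does not show consistency, so the same check would need to hold across several time periods.

```python
from math import erf, sqrt


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("pooled rate is 0 or 1; the test is undefined")
    z = (p_a - p_b) / se
    # Standard normal CDF via the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Hypothetical cohort data: mobile converts 30/1000, desktop 60/1000.
z, p_value = two_proportion_z(30, 1000, 60, 1000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value only says the gap is unlikely to be noise. It does not say where in the funnel the gap occurs or whether a tailored experience would close it.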

Even when segment differences exist, teams must confirm where the behavioural gap occurs. Funnel analysis helps identify whether a segment experiences friction at specific stages of the journey.

Segmentation insights alone are not sufficient to justify personalisation. The hypothesis must be validated through controlled experimentation to confirm that a tailored experience actually improves performance for that segment.

Even when experiments show improvement, teams must evaluate whether the benefit outweighs operational complexity. Personalisation introduces additional variants that increase development, QA, and analytics overhead.

Without a clear evaluation process, teams either introduce personalisation too early or overlook problems that affect the entire user base. The following framework helps teams decide when personalisation is justified.

Personalisation can improve digital experiences, but only when it is applied with clear evidence. Many optimisation programmes lose effectiveness because teams introduce segmentation too early instead of fixing problems in the core experience. Most performance issues affect the majority of users and should be addressed through broad improvements before introducing segment-specific variations.

The right approach is evidence-led optimisation: analyse segment behaviour, validate with experimentation, and implement personalisation only when the data proves it is necessary. Teams that follow this discipline build simpler, more scalable optimisation programmes with clearer insights. If you are building experimentation systems or data-driven optimisation strategies, Linearloop helps design the architecture, experimentation frameworks, and data foundations required to make these decisions reliably at scale.

Mayur Patel

Mar 6, 2026

6 min read