Mayur Patel

Mar 13, 2026

Last updated Mar 13, 2026

Growth teams run constant experiments, celebrate higher conversion rates, and report improvements in form completions, demo requests, or trial sign-ups. Yet leadership often sees a different reality: revenue growth slows, pipeline quality stagnates, and profit margins remain unchanged. The issue is not a lack of experimentation; it is that teams optimise the easiest metric to move rather than the metric that reflects real business value.

Conversion rate became the default optimisation metric because it responds quickly to experiments, is easy to attribute to UI changes, and fits neatly into A/B testing workflows. Over time, experimentation programmes began optimising behavioural metrics simply because they were measurable and controllable. This creates a structural paradox where businesses care about revenue, profit, and qualified pipeline, but growth teams optimise conversion rate. This blog examines why that misalignment happens and why the metric itself deserves scrutiny.

Conversion rate optimisation emerged as digital products and marketing funnels became fully measurable, allowing teams to track how users moved through landing pages, sign-up flows, checkout processes, and onboarding journeys. Instead of waiting for revenue reports or quarterly sales outcomes, teams could immediately see whether a page change increased form submissions, trial activations, or checkout completions. This visibility made conversion rate a practical optimisation target for marketing, product, and growth teams responsible for improving funnel performance.

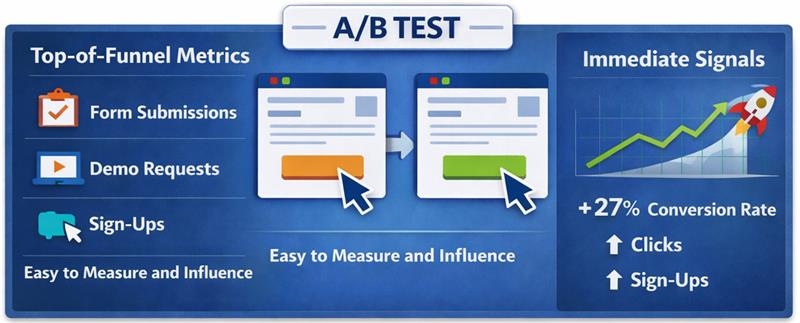

Experimentation platforms accelerated this shift. A/B testing tools allowed teams to test headlines, layouts, pricing visibility, and form structures while measuring immediate behavioural outcomes. Because experiments needed a fast success metric to determine winners, conversion rate became the most convenient signal. Teams could run tests frequently, observe results within days, and report measurable improvements as conversion lifts.

Over time, this experimentation culture established conversion rate as the default optimisation metric. Landing pages, sign-up flows, checkout experiences, and onboarding journeys were all evaluated based on whether conversions increased. Since the metric was simple to measure and responded quickly to interface changes, growth teams built experimentation programmes around improving conversion rate, gradually treating it as a growth indicator rather than a behavioural signal within a broader revenue system.

Conversion rate is a behavioural metric that measures the proportion of users who complete a predefined action within a digital journey. It helps teams understand whether visitors respond to a page, message, or product flow, but it does not reveal whether those actions generate business value. The metric captures user behaviour inside the funnel.
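
As a minimal illustration of this definition, the calculation can be sketched in Python. The function name and the visitor and sign-up counts are hypothetical, chosen only to show the arithmetic.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Return the proportion of visitors who completed the predefined action."""
    if visitors <= 0:
        raise ValueError("visitors must be a positive integer")
    if not 0 <= conversions <= visitors:
        raise ValueError("conversions must be between 0 and visitors")
    return conversions / visitors


# Hypothetical numbers: 4,000 landing-page visitors, 120 trial sign-ups.
rate = conversion_rate(conversions=120, visitors=4_000)
print(f"Conversion rate: {rate:.1%}")  # prints "Conversion rate: 3.0%"
```

Nothing in the calculation refers to deal size, margin, or lead quality, which is why the number on its own says little about business value.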

Most organisations do not intentionally prioritise conversion rate over revenue or pipeline. The metric becomes dominant because it fits how experimentation, reporting, and team responsibilities are structured. Conversion rate responds quickly to changes, is easy to attribute to specific actions, and sits within the direct control of marketing and growth teams.

Higher conversions often create the appearance of growth, but the metric measures behaviour inside the funnel rather than the value created after the action occurs. A landing page may generate more demo requests, a sign-up flow may produce more trial users, or a checkout page may record more completed transactions, yet these improvements do not automatically translate into stronger revenue, healthier margins, or a higher-quality pipeline. When teams treat conversion rate as the primary success metric, they risk optimising user actions that do not necessarily contribute to business performance.

This gap emerges when optimisation metrics are misaligned with business outcomes. Experiments that increase conversions can attract lower-intent users, generate leads that do not qualify for sales, or encourage transactions that require heavy discounting and reduce margins. In such cases, the conversion metric improves while the economic result weakens, because the optimisation process prioritises behavioural responses instead of evaluating whether those responses create measurable financial value.
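
A small worked example makes the discounting case concrete. The sketch below compares a control checkout with a discounted variant; the `Variant` class, its field names, and every number are illustrative assumptions, not data from a real experiment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    name: str
    visitors: int
    orders: int
    avg_order_value: float  # price actually paid, after any discount
    unit_cost: float        # assumed cost to fulfil one order

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.visitors

    @property
    def margin_per_visitor(self) -> float:
        # Gross margin earned, spread over everyone who saw the variant.
        return self.orders * (self.avg_order_value - self.unit_cost) / self.visitors


# Hypothetical experiment: the discounted variant converts better but earns less.
control = Variant("control", visitors=10_000, orders=200, avg_order_value=100.0, unit_cost=60.0)
discount = Variant("discount", visitors=10_000, orders=260, avg_order_value=80.0, unit_cost=60.0)

for v in (control, discount):
    print(f"{v.name}: conversion {v.conversion_rate:.1%}, margin per visitor ${v.margin_per_visitor:.2f}")
# prints:
# control: conversion 2.0%, margin per visitor $0.80
# discount: conversion 2.6%, margin per visitor $0.52
```

With these assumed figures the variant would be declared a winner on conversion rate while gross margin per visitor falls by about a third.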

Conversion improvements often appear positive in experiment dashboards, but the metric alone does not indicate whether the underlying business outcome improved. When optimisation focuses only on increasing conversions, experiments can unintentionally attract the wrong users, reduce economic value, or distort the signals that leadership relies on to evaluate growth performance.

Revenue and profit represent the outcomes businesses ultimately care about, yet these metrics are difficult to optimise through isolated experiments because they depend on multiple interconnected factors across the entire customer lifecycle. Unlike conversion rate, which responds immediately to interface changes, revenue and profitability emerge from a combination of pricing decisions, customer quality, retention behaviour, and operational costs that extend far beyond a single page or funnel step.

In B2B growth systems, conversions and pipeline represent very different signals, yet many teams treat them as the same indicator of demand. Metrics like demo requests or form submissions only reflect top-of-funnel activity, not real sales progress. What ultimately matters is whether those leads qualify and move into opportunities. Measuring sales-qualified pipeline instead of raw conversions aligns growth efforts with revenue potential.

| Dimension | Conversion-focused growth | Pipeline-focused growth |
| --- | --- | --- |
| Primary metric | Form submissions, demo requests, or sign-ups recorded at the top of the funnel. | Sales-qualified opportunities that meet defined criteria and enter the pipeline. |
| Objective | Increase the number of users completing a marketing action. | Increase the number of opportunities with real purchasing potential. |
| Lead quality | Often includes low-intent or exploratory leads. | Prioritises leads that meet qualification standards. |
| Business impact | Activity increases but sales outcomes may remain unchanged. | Directly reflects potential revenue generation. |
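
The difference between the two columns can be sketched with a few lines of Python. The record layout below (a `variant` label and a `sales_qualified` flag set by the sales team) is a hypothetical CRM export, and the counts are invented for illustration.

```python
from collections import defaultdict

# Hypothetical CRM export: one record per demo request.
demo_requests = (
    [{"variant": "A", "sales_qualified": True}] * 3
    + [{"variant": "A", "sales_qualified": False}] * 1
    + [{"variant": "B", "sales_qualified": True}] * 2
    + [{"variant": "B", "sales_qualified": False}] * 5
)


def pipeline_summary(rows):
    """Count raw submissions and sales-qualified opportunities per variant."""
    summary = defaultdict(lambda: {"submissions": 0, "qualified": 0})
    for row in rows:
        stats = summary[row["variant"]]
        stats["submissions"] += 1
        if row["sales_qualified"]:
            stats["qualified"] += 1
    return dict(summary)


for variant, stats in sorted(pipeline_summary(demo_requests).items()):
    print(f"{variant}: {stats['submissions']} submissions, {stats['qualified']} qualified")
# prints:
# A: 4 submissions, 3 qualified
# B: 7 submissions, 2 qualified
```

In this made-up data, variant B wins on submissions but variant A contributes more qualified opportunities, so the two views would pick different winners.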

Proxy metrics exist because experimentation requires fast signals that indicate whether a change influences user behaviour before the final business outcome becomes visible. Metrics such as click-through rate, form completion, or conversion rate provide immediate feedback about how users respond to a page, message, or flow, allowing teams to evaluate experiments within short timeframes. In this context, conversion rate functions as a proxy for potential business impact because it captures behavioural movement within the funnel, even though the actual outcome may only appear much later.

The risk emerges when proxy metrics begin replacing the business metrics they are meant to approximate. When experimentation programmes treat conversion rate as the primary success indicator, teams may optimise behavioural responses without evaluating whether those responses create economic value. Experiments can therefore produce consistent improvements in proxy metrics while revenue growth, pipeline quality, or profitability remain unchanged. Over time, excessive reliance on proxy metrics shifts optimisation away from business outcomes and toward behavioural signals that only partially represent the true performance of the growth system.
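
One rough way to check whether a proxy still tracks the outcome it stands in for is to compare the two across past experiments. The sketch below computes a Pearson correlation between conversion lift and revenue-per-visitor lift; the lift values are hypothetical, and a handful of experiments is weak evidence either way.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical relative lifts from six past experiments (variant vs control).
conversion_lift = [0.12, 0.08, 0.15, 0.05, 0.10, 0.20]
revenue_per_visitor_lift = [0.01, 0.03, -0.04, 0.02, 0.00, -0.06]

r = pearson(conversion_lift, revenue_per_visitor_lift)
print(f"Correlation between proxy lift and revenue lift: {r:+.2f}")
```

A value near zero or below, as in this invented data set, would suggest that wins on the proxy are not carrying through to the business metric and that the proxy deserves less weight in experiment decisions.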

Growth programmes become misaligned when behavioural metrics sit at the top of the optimisation hierarchy instead of supporting business outcomes. A more reliable approach is to organise metrics in layers so that experimentation signals connect directly to economic impact.

In this structure, behavioural metrics help diagnose user behaviour, economic metrics connect behaviour to value creation, and business metrics represent the final outcomes the organisation ultimately cares about.

Business metrics represent the final economic results produced by the growth system. These metrics define whether the business is creating sustainable value rather than simply increasing activity within the funnel.

Economic metrics translate behavioural activity into financial impact, helping teams understand whether changes in user behaviour improve revenue potential or pipeline quality.

Behavioural metrics provide immediate feedback about how users respond to interface changes, messaging adjustments, or product flow improvements.
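
The three layers can be written down as a small data structure so that unlinked metrics are easy to spot. This is a sketch under stated assumptions: the `Layer` and `Metric` types, the `supports` field, and the specific metric names (for example `revenue_per_visitor` and `lead_to_opportunity_rate`) are illustrative choices rather than a prescribed taxonomy.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Layer(Enum):
    BUSINESS = 1     # final outcomes
    ECONOMIC = 2     # translate behaviour into financial impact
    BEHAVIOURAL = 3  # immediate experiment feedback


@dataclass(frozen=True)
class Metric:
    name: str
    layer: Layer
    supports: Tuple[str, ...] = ()  # names of metrics one layer up that this one feeds


METRICS = [
    Metric("revenue", Layer.BUSINESS),
    Metric("qualified_pipeline", Layer.BUSINESS),
    Metric("revenue_per_visitor", Layer.ECONOMIC, supports=("revenue",)),
    Metric("lead_to_opportunity_rate", Layer.ECONOMIC, supports=("qualified_pipeline",)),
    Metric("conversion_rate", Layer.BEHAVIOURAL,
           supports=("revenue_per_visitor", "lead_to_opportunity_rate")),
    Metric("click_through_rate", Layer.BEHAVIOURAL),  # deliberately left unlinked
]


def unlinked_metrics(metrics):
    """Return names of non-business metrics that feed no metric in the layer above."""
    by_name = {m.name: m for m in metrics}
    problems = []
    for m in metrics:
        if m.layer is Layer.BUSINESS:
            continue
        parents = [by_name[p] for p in m.supports if p in by_name]
        if not any(p.layer.value == m.layer.value - 1 for p in parents):
            problems.append(m.name)
    return problems


print(unlinked_metrics(METRICS))  # prints ['click_through_rate']
```

A check like this flags behavioural metrics that are being tracked, and possibly optimised, without any declared path to an economic or business metric.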

When the objective shifts from improving conversion rate to improving business outcomes, the way experiments are designed and evaluated changes significantly. Instead of measuring success through immediate behavioural responses alone, teams begin examining whether experiments increase revenue potential, improve lead quality, or strengthen the pipeline.

Conversion rate is a useful behavioural signal, but it cannot be the final objective of a growth programme. When teams optimise only for conversions, they often increase funnel activity without improving revenue, pipeline quality, or profitability. Sustainable experimentation requires aligning behavioural metrics with economic impact and ultimately with business outcomes.

Growth teams therefore need experimentation frameworks that connect user behaviour to measurable business value. Experiments should be evaluated through revenue contribution, lead quality, and pipeline impact rather than isolated conversion lifts. If your organisation is rethinking how experimentation aligns with real growth outcomes, Linearloop helps teams design optimisation systems that link product changes, behavioural signals, and revenue impact.

Mayur Patel, Head of Delivery at Linearloop, drives seamless project execution with a strong focus on quality, collaboration, and client outcomes. With deep experience in delivery management and operational excellence, he ensures every engagement runs smoothly and creates lasting value for customers.