Mayur Patel

Mar 3, 2026


Last updated Mar 5, 2026

When every milligram carries financial weight, manual tracking becomes a liability. VGR Gold needed more than a basic ERP. They needed a precision system that could eliminate manual gold tracking, digitise the entire refinery lifecycle, and ensure stage-wise traceability across melting, assaying, refining, casting, and recovery. The objective was to protect high-value assets through system architecture.

Linearloop built a refinery-grade ERP with a precision recovery engine at its core, designed to track residual particles, enforce controlled workflows, and provide real-time visibility across every stage. This case study breaks down how we engineered that system under tight constraints, extended platform capabilities, and delivered a zero-loss operational framework. Read on to see how we helped VGR Gold move from manual processes to milligram-level control.


VGR Gold is a Dubai-based gold refinery operating in a high-precision, multi-stage refining environment. Their workflow spans raw material intake, melting, assaying, refining, casting, and final recovery, each stage directly impacting weight, purity, and financial value. The business handles high-value material daily, where even microscopic discrepancies can translate into measurable loss.

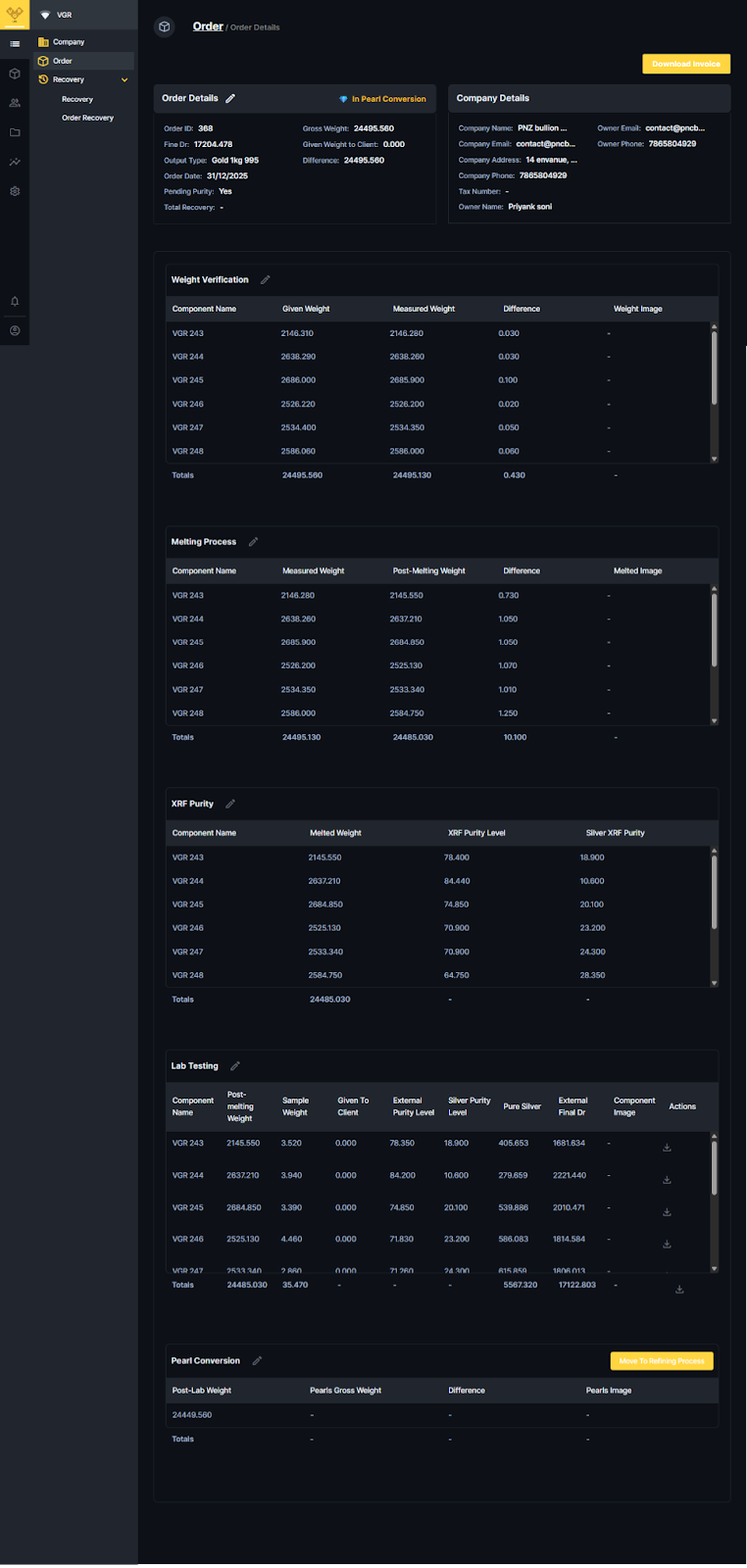

The operating environment is accuracy-critical and regulatory-sensitive. Every batch must be traceable, documented, and verifiable across stages. Process deviations are not just operational issues; they carry financial and compliance implications. VGR needed systems that matched the precision of their physical operations.


Automation was not the goal. Precision was. VGR Gold needed a system that could account for every gram moving through the refinery, eliminate blind spots between stages, and replace manual reconciliation with system-enforced accuracy. The North Star was operational control at milligram-level fidelity.

The system had to be delivered fast, perform flawlessly, and operate in a zero-error environment. Engineering decisions had to balance speed with precision, without compromising traceability or security.


Success was defined by operational trust. VGR needed a system that removed manual dependency, enforced accuracy at every stage, and created controlled visibility across the refinery lifecycle.

The complexity sat in the logic layer, where calculation errors, workflow gaps, or data inconsistencies could directly translate into financial risk. Every engineering decision had to protect traceability and precision.


The stack was selected for control, extensibility, and speed of execution. We used Directus as the operational backbone and extended it where refinery-specific logic required deeper customisation. The architecture remained lightweight but precision-focused.

| Layer | Technology | Purpose |
| --- | --- | --- |
| Backend & CMS | Directus | Core data management layer for refinery workflows, collections, and operational logic extensions |
| Frontend | Vue.js | Stage-wise tracking interface aligned to refinery operations |
| Database | Directus-managed database | Centralised storage for batch data, recovery calculations, and documentation mapping |
| Authentication & Access | Directus RBAC + custom logic | Stage-based permissions and controlled workflow transitions |
| Version Control | GitHub | Source control, deployment workflows, and iterative development cycles |

The architecture was designed around one principle: trace every gram, at every stage, without breaking data integrity. Instead of layering features on top of a generic ERP, we structured the system around refinery flow, ensuring stage transitions, weight calculations, and reporting logic were deeply interconnected and scalable.
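To make the idea of controlled stage transitions concrete, here is a minimal sketch of a transition guard. The stage names come from the workflow described in this case study; the role names, the `ROLE_STAGES` mapping, and the `advanceBatch` function are hypothetical illustrations, not the production code or the Directus permissions API.

```typescript
// Illustrative sketch only: roles, mapping, and function names are assumptions.
type Stage = "intake" | "melting" | "assaying" | "refining" | "casting" | "recovery";

// Stages in the order a batch moves through the refinery.
const STAGE_ORDER: readonly Stage[] = [
  "intake", "melting", "assaying", "refining", "casting", "recovery",
];

// Hypothetical mapping of roles to the stages they may move a batch into.
const ROLE_STAGES: Record<string, readonly Stage[]> = {
  melter: ["melting"],
  assayer: ["assaying"],
  supervisor: STAGE_ORDER,
};

interface Batch {
  id: string;
  stage: Stage;
}

// Returns a new batch at the target stage, or throws if the move is not allowed.
function advanceBatch(batch: Batch, target: Stage, role: string): Batch {
  const allowed = ROLE_STAGES[role] || [];
  if (allowed.indexOf(target) === -1) {
    throw new Error(`Role "${role}" may not move a batch into "${target}"`);
  }
  // Only the next stage in sequence is a valid target: no skipping, no going back.
  const currentIndex = STAGE_ORDER.indexOf(batch.stage);
  if (STAGE_ORDER.indexOf(target) !== currentIndex + 1) {
    throw new Error(`Batch ${batch.id} cannot move from "${batch.stage}" to "${target}"`);
  }
  return { id: batch.id, stage: target };
}
```

In this sketch a batch can only move one stage forward, and only when the acting role is permitted on the target stage. A real deployment would more likely enforce the same rule server-side, so the interface cannot bypass it.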


The timeline was fixed but the scope was complex. Execution required speed without sacrificing calculation accuracy or architectural integrity. We structured delivery around tight feedback loops and disciplined iteration to maintain control under pressure.


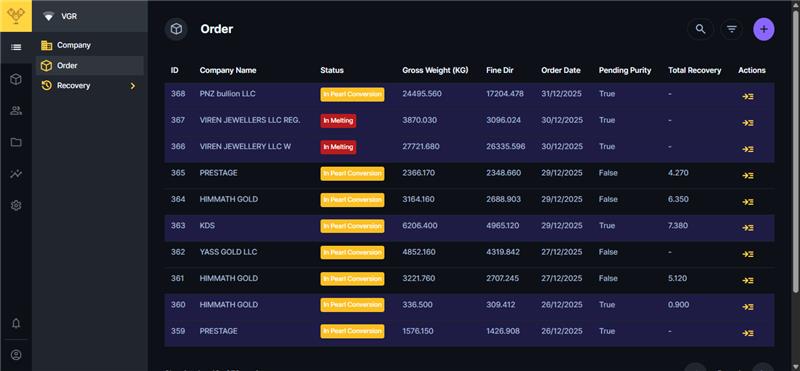

The ERP replaced fragmented manual tracking with a centralised, stage-driven system. Every refinery phase now operates within a controlled workflow where data propagates with validation.

Manual logs and spreadsheet reconciliations were eliminated. Batch entries, weight transitions, and recovery calculations are system-enforced, reducing operational gaps and dependency on cross-verification.
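As an illustration of what system-enforced weight transitions could look like, the sketch below checks a batch's stage records for continuity. It assumes weights are stored as whole milligrams, that a stage's output never exceeds its input, and that each stage's input must equal the previous stage's output. The `StageRecord` shape and `validateChain` function are hypothetical; the case study does not describe the actual validation rules.

```typescript
// Illustrative sketch only: field names and rules are assumptions.
interface StageRecord {
  stage: string;
  inputMg: number;  // weight entering the stage, in whole milligrams
  outputMg: number; // weight leaving the stage, in whole milligrams
}

// True for finite, non-negative whole numbers.
function isWholeMg(value: number): boolean {
  return isFinite(value) && value >= 0 && Math.floor(value) === value;
}

// Returns a list of human-readable problems; an empty list means the chain is consistent.
function validateChain(records: readonly StageRecord[]): string[] {
  const errors: string[] = [];
  let previousOutputMg: number | null = null;

  for (const rec of records) {
    if (!isWholeMg(rec.inputMg) || !isWholeMg(rec.outputMg)) {
      errors.push(`${rec.stage}: weights must be non-negative whole milligrams`);
    }
    if (rec.outputMg > rec.inputMg) {
      errors.push(`${rec.stage}: output exceeds input`);
    }
    if (previousOutputMg !== null && rec.inputMg !== previousOutputMg) {
      errors.push(`${rec.stage}: input does not match previous stage output`);
    }
    previousOutputMg = rec.outputMg;
  }
  return errors;
}
```

Storing weights as integers in the smallest tracked unit avoids floating-point drift when many small differences are summed, which matters when the reporting target is milligram-level accuracy.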

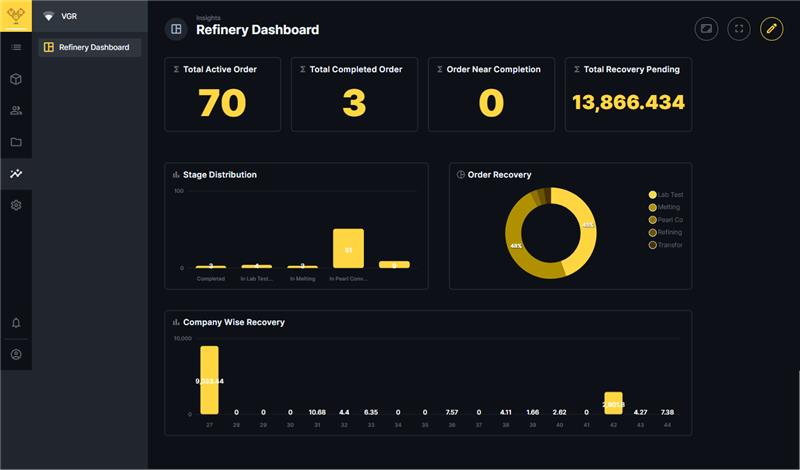

Stage-level visibility is real time. Managers can track batch progression, weight evolution, and residual quantities instantly. The recovery module calculates residual gold at each phase and updates reports dynamically, ensuring milligram-level traceability.
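The recovery calculation can be pictured with a short sketch. The formula here is an assumption for illustration, since the case study does not publish the recovery engine's logic: residual is taken as input minus output for a stage, and any residual not logged as recovered is reported as unaccounted. All type and function names are hypothetical.

```typescript
// Illustrative sketch only: the formula and names are assumptions.
interface StageWeights {
  stage: string;
  inputMg: number;     // weight entering the stage, whole milligrams
  outputMg: number;    // weight leaving the stage, whole milligrams
  recoveredMg: number; // residual gold physically recovered and logged
}

interface RecoveryLine {
  stage: string;
  residualMg: number;    // inputMg - outputMg
  recoveredMg: number;
  unaccountedMg: number; // residualMg - recoveredMg
}

interface RecoveryReport {
  lines: RecoveryLine[];
  totalUnaccountedMg: number;
}

function recoveryReport(stages: readonly StageWeights[]): RecoveryReport {
  const lines: RecoveryLine[] = stages.map((s) => {
    const residualMg = s.inputMg - s.outputMg;
    return {
      stage: s.stage,
      residualMg,
      recoveredMg: s.recoveredMg,
      unaccountedMg: residualMg - s.recoveredMg,
    };
  });
  const totalUnaccountedMg = lines.reduce((sum, line) => sum + line.unaccountedMg, 0);
  return { lines, totalUnaccountedMg };
}

// Example with made-up numbers: 1 kg enters melting, 20 mg ends up unaccounted.
const report = recoveryReport([
  { stage: "melting", inputMg: 1000000, outputMg: 999500, recoveredMg: 480 },
  { stage: "refining", inputMg: 999500, outputMg: 999100, recoveredMg: 400 },
]);
console.log(report.totalUnaccountedMg); // 20
```

A report shaped like this lets a manager see, per stage, how much material left the main flow and how much of it was recovered, which is the kind of visibility the recovery module is described as providing.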

The platform also creates a scalable base for Phase 2:

Live metrics will follow post-launch, but structurally, the shift is clear: faster workflows, reduced waste, stronger retention accuracy, and tighter operational control.


The leadership team validated the recovery logic, stage transitions, and reporting structure during simulation cycles. They highlighted three areas of impact:

Operational teams responded positively to the stage-aligned interface. The workflow mirrors physical refinery movement, reducing friction during adoption. Role-based controls were particularly appreciated, as they introduced structured accountability without slowing execution.

We remain actively involved post-deployment. The engagement has transitioned from build phase to optimisation and expansion. Phase 2 is already structured around controlled enhancement rather than feature expansion for its own sake.

Phase 2 roadmap includes:

The Gold VGR ERP demonstrates what happens when operational risk is addressed through system architecture. By replacing manual reconciliation with stage-aware workflows and milligram-level recovery logic, VGR moved from ledger-based tracking to a controlled, intelligent refinery platform. Precision became structural. Traceability became enforceable. High-value material handling is now backed by engineered accountability.

If your operations depend on accuracy, compliance, and zero-loss tracking, the solution is not another tool; it is architecture-first thinking. Linearloop builds systems designed for precision environments where mistakes are expensive. If you are ready to modernise critical workflows with the same level of control, let’s talk.

Mayur Patel, Head of Delivery at Linearloop, drives seamless project execution with a strong focus on quality, collaboration, and client outcomes. With deep experience in delivery management and operational excellence, he ensures every engagement runs smoothly and creates lasting value for customers.